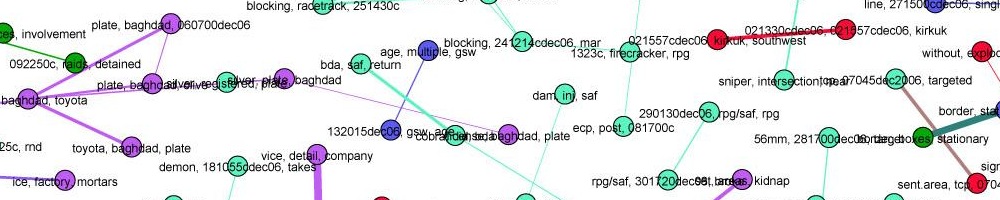

In previous weeks we discussed filters that are purely algorithmic (such as NewsBlaster) and filters that are purely social (such as Twitter). This week we discussed how to build a filtering system that combines social interactions with algorithmic components.

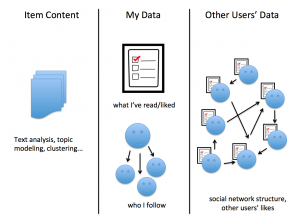

Here are the sources of information that such a hybrid algorithm can draw on.

We looked at two concrete examples of hybrid filtering. The first was Reddit’s comment ranking algorithm, which takes users’ upvotes and downvotes and sorts comments not just by the proportion of upvotes, but by how certain we can be about that proportion, given how many people have actually voted so far. The second was item-based collaborative filtering, one of several classic techniques that work from a matrix of user-item ratings. Algorithms of this kind power everything from Amazon’s “users who bought A also bought B” to Netflix movie recommendations to Google News’ personalization system.
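Both ideas can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Reddit’s or Amazon’s production code. For comment ranking, the sketch scores each comment by the lower bound of the Wilson score confidence interval on its upvote proportion, which is how Reddit’s “best” sort is commonly described; the 95% confidence level (`z = 1.96`) is an assumed default. For item-based collaborative filtering, it uses adjusted cosine similarity (one of the similarity measures Sarwar et al. compare) and a similarity-weighted average of the ratings the user has already given. The function names, the dict-of-dicts rating format, the toy ratings, and the choice to skip non-positive similarities are all hypothetical.

```python
import math
from typing import Dict

# --- Comment ranking: confidence-adjusted upvote proportion ---

def wilson_lower_bound(upvotes: int, downvotes: int, z: float = 1.96) -> float:
    """Lower bound of the Wilson score interval for the true upvote fraction.

    z = 1.96 (about 95% confidence) is an assumed default. Comments with
    few votes get a wide interval and therefore a low, cautious score.
    """
    n = upvotes + downvotes
    if n == 0:
        return 0.0
    p = upvotes / n
    z2 = z * z
    centre = p + z2 / (2 * n)
    margin = z * math.sqrt(p * (1 - p) / n + z2 / (4 * n * n))
    return (centre - margin) / (1 + z2 / n)

# --- Item-based collaborative filtering ---

Ratings = Dict[str, Dict[str, float]]  # hypothetical format: user -> {item: rating}

# Hypothetical toy user-item ratings.
TOY_RATINGS: Ratings = {
    "alice": {"A": 5, "B": 4, "C": 1},
    "bob":   {"A": 4, "B": 5, "C": 2},
    "carol": {"A": 1, "B": 2, "C": 5},
    "dave":  {"A": 5, "C": 1},
}

def adjusted_cosine(ratings: Ratings, i: str, j: str) -> float:
    """Similarity of items i and j over users who rated both,
    after subtracting each user's mean rating."""
    num = norm_i = norm_j = 0.0
    for user_ratings in ratings.values():
        if i in user_ratings and j in user_ratings:
            mean = sum(user_ratings.values()) / len(user_ratings)
            di = user_ratings[i] - mean
            dj = user_ratings[j] - mean
            num += di * dj
            norm_i += di * di
            norm_j += dj * dj
    if norm_i == 0 or norm_j == 0:
        return 0.0
    return num / math.sqrt(norm_i * norm_j)

def predict(ratings: Ratings, user: str, item: str) -> float:
    """Predict the user's rating of item as a similarity-weighted
    average of the user's ratings on other items."""
    num = den = 0.0
    for other, rating in ratings[user].items():
        if other == item:
            continue
        s = adjusted_cosine(ratings, item, other)
        # Assumption: skip dissimilar items; a negative weight in a plain
        # weighted sum can drag the prediction in a misleading direction.
        if s <= 0:
            continue
        num += s * rating
        den += s
    return num / den if den else 0.0

if __name__ == "__main__":
    # Same 60% upvote ratio, but more votes yields a higher (more certain) score.
    print(wilson_lower_bound(6, 4), wilson_lower_bound(60, 40))
    # Dave liked A, which other users rate much like B, so B is predicted high.
    print(predict(TOY_RATINGS, "dave", "B"))
```

The Wilson bound rewards vote volume: a comment at 6 up and 4 down scores below one at 60 up and 40 down, even though both are 60% upvoted, because the larger sample narrows the interval. Sarwar et al. also examine keeping only each item’s most similar neighbours; this sketch simply scans every item the user has rated, which is fine for a toy matrix but would not scale to a real catalogue.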

Evaluating the performance of such systems is a major challenge. We need some metric, but many problems have no obvious way to measure whether we’re doing well, and there are many candidate metrics to choose from. Business goals (revenue, time on site, engagement) are generally much easier to measure than editorial goals.

The readings for this week were:

- Item-Based Collaborative Filtering Recommendation Algorithms, Sarwar et al.

- How Reddit Ranking Algorithms Work, Amir Salihefendic

- Google News Personalization: Scalable Online Collaborative Filtering, Das et al.

- Slashdot Moderation, Rob Malda

- Pay attention to what Nick Denton is doing with comments, Clay Shirky

- How does Google use human raters in web search?, Matt Cutts

This concludes our work on filtering systems, except for Assignment 2.