This assignment is designed to help you develop a feel for the way topic modeling works, the connection to the human meanings of documents, and one way of handling the time dimension. First you will analyze a corpus of AP news articles. Then you’ll look at the State of the Union speeches, and report on how the subjects have shifted over time in relation to historical events.

Note: This assignment requires reading the documents, not just running algorithms on them. I am asking you to tell me how well these algorithms capture the meaning of the documents, and you can only determine meaning by reading the documents. So when you see a word scored highly within a document set, go read the documents that contain that word. When a topic ranks high for a document, go read the documents that contain that topic.

1) Load the data. To begin with, you’ll try LDA on a homogeneous document set of short clean articles: this collection of AP wire stories. Get this CSV loaded as a list of strings, one per document.
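As a starting point, here is a minimal sketch of loading a CSV into a list of strings with the standard `csv` module. The function name `load_documents` and its parameters are illustrative, and the column layout of the AP file is an assumption: check which column actually holds the article text and whether there is a header row.

```python
import csv

def load_documents(path, text_column=-1, skip_header=False):
    """Return one string per CSV row, taken from the given column.

    text_column and skip_header are assumptions about the file layout;
    adjust them after looking at the first few rows of the real file.
    """
    documents = []
    with open(path, newline="", encoding="utf-8") as f:
        reader = csv.reader(f)
        if skip_header:
            next(reader, None)  # discard the header row if there is one
        for row in reader:
            if row:  # ignore completely blank lines
                documents.append(row[text_column])
    return documents
```

Using `csv.reader` rather than splitting on commas matters here, because article text usually contains commas and quoted passages.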

2) Generate TF-IDF vectors. Use the gensim package to generate TF-IDF weighted document vectors. Check out the gensim example code here. You will need to go through the file twice: once to generate the dictionary (the code snippet starting with “collect statistics about all tokens”) and then again to convert each document to what gensim calls the bag-of-words representation, which is un-normalized term frequency (the code snippet starting with “class MyCorpus(object)”).
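To make the two passes concrete, here is a plain-Python sketch of what they compute. It is not gensim's implementation; gensim's `Dictionary` and `doc2bow` do this work for you, and the ids they assign will differ. The helper names `build_dictionary` and `to_bow` are made up for illustration.

```python
from collections import Counter

def build_dictionary(token_lists):
    """Pass 1: assign an integer id to every distinct token in the corpus."""
    token2id = {}
    for tokens in token_lists:
        for token in tokens:
            token2id.setdefault(token, len(token2id))
    return token2id

def to_bow(tokens, token2id):
    """Pass 2: turn one document into sorted (token_id, raw_count) pairs."""
    counts = Counter(token2id[t] for t in tokens if t in token2id)
    return sorted(counts.items())

docs = [["wire", "story", "wire"], ["story", "news"]]
token2id = build_dictionary(docs)
bows = [to_bow(doc, token2id) for doc in docs]
```

Each document ends up as a sparse list of `(id, count)` pairs, which is the same shape gensim uses for its bag-of-words vectors.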

Note that there is implicitly another step here, which is to tokenize the document text into individual word features — not as straightforward in practice as it seems at first, but the example code just does the simplest, stupidest thing, which is to lowercase the string and split on spaces. You may want to use a better stopword list, such as this one.
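One possible step up from splitting on spaces is a small regular-expression tokenizer, sketched below. The stopword set here is a tiny placeholder, not the list linked above, and the `min_length` cutoff is an arbitrary choice you may want to change.

```python
import re

# Placeholder stopwords for illustration only; substitute a fuller list.
STOPWORDS = frozenset({"a", "an", "and", "for", "in", "of", "on", "the", "to"})

# Runs of ASCII letters, optionally with one internal straight apostrophe.
TOKEN_RE = re.compile(r"[a-z]+(?:'[a-z]+)?")

def tokenize(text, stopwords=STOPWORDS, min_length=2):
    """Lowercase the text, pull out word-like tokens, and drop stopwords."""
    tokens = TOKEN_RE.findall(text.lower())
    return [t for t in tokens if len(t) >= min_length and t not in stopwords]
```

Unlike a plain `split()`, this does not leave punctuation attached to words, so "speech," and "speech" count as the same feature. It also discards digits and non-ASCII letters, which may or may not be what you want.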

Once you have your Corpus object, tell gensim to generate tf-idf scores for you like so.

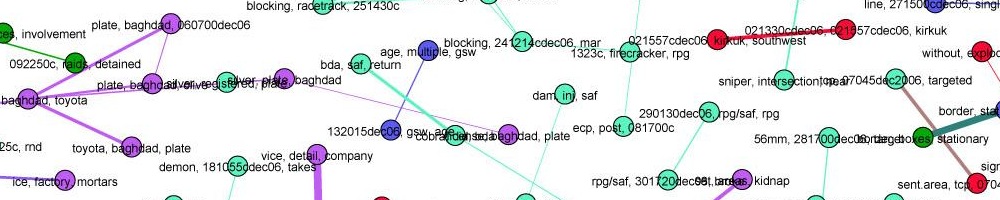

3) Do LSI topic modeling. You can apply LSI to the TF-IDF vectors, like so. You will have to supply the number of topics to generate. Figuring out a good number is part of the assignment. Print out the resulting topics, each topic as a list of word coefficients. Now, sample ten topics randomly (not the first ten, a random ten!) from your set for closer analysis. Try to annotate each of these ten topics with a short descriptive name or phrase that captures what it is “about.” You will have to refer to the original documents that contain high proportions of that topic, and you will likely find that some topics have no clear concept.

Turn in: your annotated topics plus a comment on how well you feel each “topic” captured a real human concept.

4) Now do LDA topic modeling. Repeat the exercise of step 3 but with LDA instead, again trying to annotate ten randomly sampled topics. What is different? Did it better capture the meaning of the documents? If so, “better” how?

Turn in: your annotated topics plus a comment on how LDA differed from LSI.

5) Now apply LDA to the State of the Union. Download the source data file state-of-the-union.csv. This is a standard CSV file with one speech per row. There are two columns: the year of the speech, and the text of the speech. You will write a Python program that reads this file and turns it into TF-IDF document vectors, then prints out some information. Here is how to read a CSV in Python. You may need to add the line

csv.field_size_limit(1000000000)

to the top of your program to be able to read this large file. Also, this file is probably too big to open in Excel or to read with Pandas.

Note: some years have more than one speech! Design your data structures accordingly.
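One way to handle repeated years is to keep a list of speeches per year rather than a single string, as in the sketch below. The function names are illustrative, and both the UTF-8 encoding and the handling of a possible header row are assumptions about the file.

```python
import csv
from collections import defaultdict

# Raise the per-field limit so very long speeches can be read.
csv.field_size_limit(1000000000)

def read_speeches(path):
    """Return a list of (year, text) pairs, one per speech."""
    speeches = []
    with open(path, newline="", encoding="utf-8") as f:
        for row in csv.reader(f):
            if len(row) < 2:
                continue  # skip blank or malformed rows
            year_text = row[0].strip()
            if not year_text.isdigit():
                continue  # skips a header row such as "year,text", if present
            speeches.append((int(year_text), row[1]))
    return speeches

def group_by_year(speeches):
    """Map each year to the list of all speeches given that year."""
    by_year = defaultdict(list)
    for year, text in speeches:
        by_year[year].append(text)
    return dict(by_year)
```

Keeping the flat list of `(year, text)` pairs around is also convenient later, since each speech still needs its own TF-IDF vector.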

6) Determine how speeches have changed over the 20th century. We’ll use a very simple algorithm:

- Generate TF-IDF vectors for the entire corpus

- Group speeches by decade

- Sum TF-IDF vectors for all speeches in each decade to get one “summary” vector per decade

- Print out top 10 most highly ranked words in each decade vector
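The steps above can be sketched in plain Python as follows. This assumes each TF-IDF vector is a sparse list of `(term_id, weight)` pairs, which is the shape gensim produces, and that `id2word` maps ids back to words (a gensim `Dictionary` can be indexed this way). The function names are made up for illustration.

```python
from collections import defaultdict

def decade_of(year):
    """1943 -> 1940, 1950 -> 1950."""
    return (year // 10) * 10

def summarize_decades(years, tfidf_vectors, id2word, top_n=10):
    """Sum sparse TF-IDF vectors per decade and return the top terms.

    years[i] is the year of the speech whose vector is tfidf_vectors[i].
    """
    totals = defaultdict(lambda: defaultdict(float))
    for year, vector in zip(years, tfidf_vectors):
        decade_totals = totals[decade_of(year)]
        for term_id, weight in vector:
            decade_totals[term_id] += weight
    summary = {}
    for decade in sorted(totals):
        ranked = sorted(totals[decade].items(),
                        key=lambda item: item[1], reverse=True)
        summary[decade] = [(id2word[term_id], weight)
                           for term_id, weight in ranked[:top_n]]
    return summary
```

Printing the result is then a short loop over `summary.items()`. Restricting the input to the years you want to analyze is left to the caller.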

Turn in: an analysis of how the topics of the State of the Union have changed over the decades of the 20th century. What patterns do you see? Can you connect the terms to major historical events? (wars, the Great Depression, assassinations, the civil rights movement, Watergate…)