This week we looked at how groups of people can act as information filters.

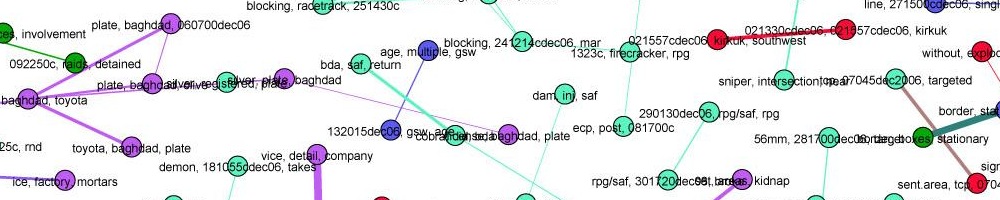

First we studied Diakopoulos’ SRSR (“seriously rapid source review”) system for finding sources on Twitter. There were a few clever bits of machine learning in there, for classifying source types (journalist/blogger, organization, or ordinary individual) and for identifying eyewitnesses. But mostly the system is useful because it presents many different “cues” to the journalist to help them determine whether a source is interesting and/or trustworthy. Useful, but when we look at how this fits into the broader process of social media sourcing, and in particular how it fits into the Associated Press’ verification process, it’s clear that current software only addresses part of this complex process. This isn’t a machine learning problem; it’s a user interface and workflow design issue. (For more on social media verification practices, see for example the BBC’s “UGC hub.”)

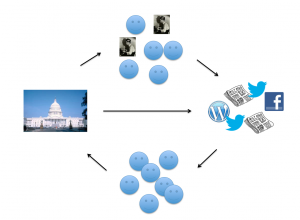

More broadly, journalism now involves users informing each other, and institutions or other authorities communicating directly. The model of journalism we looked at last week, which put reporters at the center of the loop, is simply wrong. A more complete picture includes users and institutions as publishers.

That horizontal arrow of institutions producing their own broadcast media is such a new part of the journalism ecosystem, and so disruptive, that the phenomenon has its own name: “sources go direct,” which seems to have been originally coined by blogging pioneer Dave Winer.

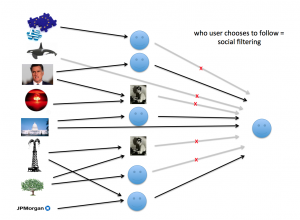

But this picture does not include filtering. There are thousands — no, millions — of sources we could tune into now, but we only direct attention to a narrow set of them, maybe including some journalists or news publications, but probably mostly other types of source, including some primary sources.

This is social filtering. By choosing who we follow, we determine what information reaches us. Twitter in particular does this very well, and we looked at how the Twitter network topology doesn’t look like a human social network, but is more tuned for news distribution.
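To make the idea concrete, here is a minimal sketch of social filtering as a data operation. The `Post` type, the `social_filter` function, and the account names are all hypothetical and chosen for illustration; this is not how Twitter actually builds a timeline. The point is only that nothing scores the content: the reader's follow set does all the filtering.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    # Hypothetical minimal post record: who published it and what it says.
    author: str
    text: str


def social_filter(posts, following):
    """Keep only posts whose author is in the reader's follow set.

    There is no relevance score here; the choice of whom to follow
    is the entire filter.
    """
    return [p for p in posts if p.author in following]


# Illustrative data (invented for this example).
posts = [
    Post("city_hall", "Road closures announced for Saturday"),
    Post("local_reporter", "Council vote delayed until next week"),
    Post("stranger", "Unrelated post"),
]
following = {"city_hall", "local_reporter"}

timeline = social_filter(posts, following)
for post in timeline:
    print(f"{post.author}: {post.text}")
```

Changing the `following` set changes what reaches the reader without touching any code, which is why the behavior of the people in the network matters more than the algorithm.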

There are no algorithms involved here… except of course for the code that lets people publish and share things. But the effect here isn’t primarily algorithmic. Instead, it’s about how people operate in groups. This gets us into the concept of “social software,” which is a new interdisciplinary field with its own dynamics. We used the metaphor of “software as architecture,” suggested by Joel Spolsky, to think about how software influences behavior.

As an example of how environment influences behavior, we watched this video, which shows how to get people to take the stairs.

I argued that there are three forces which we can use to shape behavior in social software: norms, laws, and code. This implies that we have to write the code to be “anthropologically correct,” as Spolsky put it, but it also means that the code alone is not enough. This is something Spolsky observed as StackOverflow has become a network of Q&A sites on everything from statistics to cooking: each site has its own community and its own culture.

Previously we phrased the filter design problem in two ways: as a relevance function, and as a set of design criteria. When we use social filtering, there’s no relevance function deciding what we see. But we still have our design criteria, which tell us what type of filter we would like, and we can try to build systems that help people work together to produce this filtering. And along with this, we can imagine norms (habits, best practices, etiquette) that help this process along, an idea explored more thoroughly by Dan Gillmor in We The Media.

The readings from the syllabus were:

- A Group is its own worst enemy, Clay Shirky

- What’s the point of social news?, Jonathan Stray

- Finding and Assessing Social Information Sources in the Context of Journalism, Nick Diakopoulos et al.

- Learning from Stackoverflow, first fifteen minutes, Joel Spolsky

- Norms, Laws, and Code, Jonathan Stray

- What is Twitter, a Social Network or a News Media?, Haewoon Kwak et al.

- International reporting in the age of participatory media, Ethan Zuckerman

- We The Media. Introduction and Chapter 1, Dan Gillmor